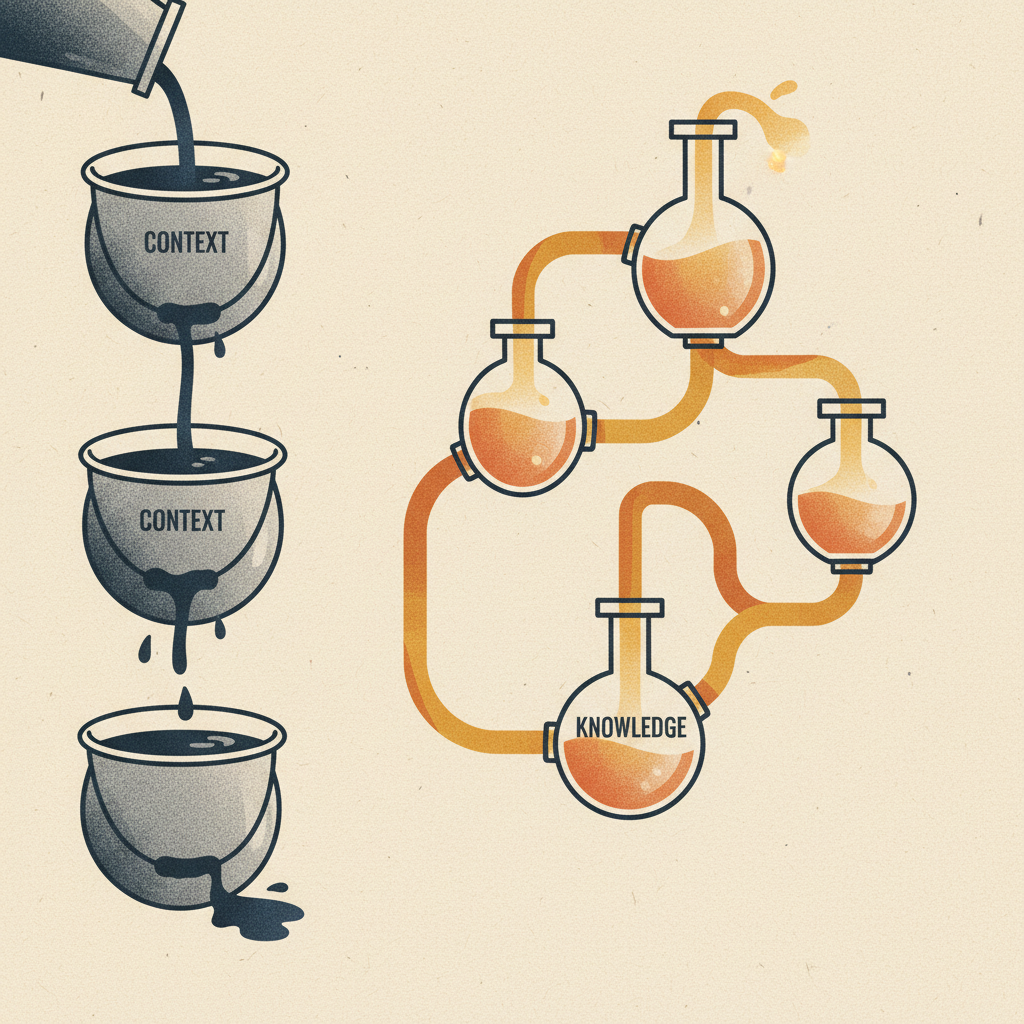

Every person on your team is destroying value right now. The product manager prompts Claude, copies the output, pastes it into Notion, then Slacks the product designer. The product designer opens a fresh AI session with zero inherited context. The engineer starts a third session - tabula rasa. Three people using AI. Three completely isolated knowledge graphs. That is insanity.

Picture this. Your PM finishes a customer call. Before she even closes her laptop, the insight has flowed into a shared layer where the designer's AI is already weaving it into a flow exploration, and the engineer's AI is already pressure-testing it against the architecture. Nobody forwarded a Slack message. Nobody scheduled a sync. The work just kept moving. That isn't a futuristic vision. That's what one connected workspace looks like the day you wire it together.

The cost of working the disconnected way isn't "inefficiency." It's compounding information loss at every single handoff. Each copy-paste is a context tax. Each re-prompt from scratch is value destruction. And nobody is accounting for it on any balance sheet. Multiply 30 handoffs a day by 10 team members by 250 working days - that's 75,000 micro-context-losses per year that show up nowhere in your P&L but quietly drag every output toward generic.

Do the maths in dollars. If the average fully-loaded cost of a knowledge worker on your team is $150K per year, every hour of context assembly costs you about $75. A team of 10 losing 90 minutes per day to copy-paste, re-prompting, and "can you send me that doc?" requests is burning around $280K per year on work that produces exactly zero value. That's a senior hire's entire compensation. Gone. Quietly. Every year. And the AI you're paying for? It's barely scratching the surface of what it could do because it never sees the full picture. You're paying twice and getting half.
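The arithmetic behind those figures can be checked in a few lines. This is a rough sketch, not a costing model: the 2,000 paid hours per year is an assumption (it is what makes $150K come out to about $75 an hour), and every other input is taken from the numbers above.

```python
# Inputs taken from the text, except HOURS_PER_YEAR, which is an assumption.
FULLY_LOADED_COST = 150_000   # dollars per person per year
HOURS_PER_YEAR = 2_000        # assumed: 250 working days x 8 hours
WORKING_DAYS = 250
TEAM_SIZE = 10
LOST_HOURS_PER_DAY = 1.5      # 90 minutes per person per day
HANDOFFS_PER_DAY = 30         # per person

# Cost of one hour of a knowledge worker's time.
hourly_cost = FULLY_LOADED_COST / HOURS_PER_YEAR

# Yearly cost of the time the whole team spends assembling context.
annual_context_cost = TEAM_SIZE * LOST_HOURS_PER_DAY * WORKING_DAYS * hourly_cost

# Yearly count of handoffs where context can be lost.
annual_handoffs = HANDOFFS_PER_DAY * TEAM_SIZE * WORKING_DAYS

print(f"Hourly cost: ${hourly_cost:,.0f}")
print(f"Annual cost of context assembly: ${annual_context_cost:,.0f}")
print(f"Handoffs per year: {annual_handoffs:,}")
```

Swap in your own team size, loaded cost, and lost minutes per day. The shape of the result holds even if your inputs are smaller.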

┌────────────────────────────────────────────────────────────────────────────────┐
│                                                                                │
│  ● HOW MOST TEAMS USE AI               ● HOW A 100x TEAM COLLABORATES          │
│                                                                                │
│  Parallel monologues:                  Shared brain:                           │
│                                                                                │
│  ┌─────┐     ┌─────┐     ┌─────┐       ┌─────────────────────────────┐         │
│  │ PM  │     │ Des │     │ Eng │       │    SHARED CONTEXT LAYER     │         │
│  │  ↕  │     │  ↕  │     │  ↕  │       │                             │         │
│  │ AI  │     │ AI  │     │ AI  │       │  PM  ←→ ┌────┐ ←→ Design    │         │
│  └──┬──┘     └──┬──┘     └──┬──┘       │         │ AI │              │         │
│     │           │           │          │  Eng ←→ │    │ ←→ Ops       │         │
│     ↓           ↓           ↓          │         └────┘              │         │
│  copy/paste  copy/paste  starts        │  All read from + write to   │         │
│  to Notion   to Slack    from zero     │  the same knowledge base.   │         │
│                                        └─────────────────────────────┘         │
│  PM's AI doesn't know                                                          │
│  Eng constraints.                      PM's insight enriches                   │
│  Des AI doesn't know                   Des AI automatically.                   │
│  user research.                        Eng constraints flow to                 │
│  Eng AI doesn't know                   Product without a meeting.              │
│  the brief.                            Nothing lost in translation.            │
│                                                                                │
│  ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─ ─   │
│                                                                                │
│  CAREER IMPACT                         CAREER IMPACT                           │
│  You're a copy-paste bridge            You're connected to the                 │
│  between disconnected tools.           full team's intelligence.               │
│  Context dies at every                 Every colleague's work                  │
│  handoff.                              makes your AI smarter.                  │
│                                                                                │
│  BUSINESS IMPACT                       BUSINESS IMPACT                         │
│  Meetings exist to share               Context flows without                   │
│  context that should already           meetings. "That's not                   │
│  be shared. Double overhead.           technically feasible" → 0.              │
│                                                                                │
└────────────────────────────────────────────────────────────────────────────────┘

The 100x Individual

Here's the thing. Your collaboration surface is your knowledge infrastructure. Every tool you touch - editor, design tool, project tracker - should read from and write to the same context layer. Right now they are all isolated databases. You are paying the transaction cost of bridging them manually, dozens of times a day, and that cost compounds.

A design engineer we work with wired his entire stack through MCP integrations. Claude reads his Figma component library, his GitHub repo structure, his Notion design principles, his Linear backlog. When he asks for a component, the AI already knows his design system, his code patterns, his current sprint priorities. Zero copy-paste. The tools share a brain. The ROI on that two-hour setup? He estimates 6-8 hours saved per week. Every week. Compounding.

A product manager connected her research corpus, competitive analysis, and user interview transcripts to the same context layer. When she prompts for a brief, the AI draws on everything she has ever learned about her users - not the 10% she remembers to paste into the prompt window. That delta between 10% and 100% context? That is where the real alpha lives. A single strategy doc that used to take her four hours of context assembly now takes 40 minutes. Same judgment, same quality, one-sixth the time. She reinvests the savings into the hardest problems on her roadmap - the ones that never used to get enough thinking time because context assembly was eating the budget.

A founder connected pitch materials, customer data, financial models, and strategic memos. Investor deck for a Series A fund? The AI pulls the right metrics automatically. Deck for a strategic corporate partner? Different context, zero extra work. She derisked the highest-stakes communications of her company.

An engineering lead connected architecture docs, ADRs, incident postmortems, and deployment configs. When a new engineer asks "why did we build it this way?" - the AI answers with the team's actual decisions and tradeoffs. Not generic Stack Overflow patterns. That is the difference between a $200K hire ramping in two weeks versus two months.

A clinical leader connected care protocols, patient population data, and outcome patterns. Every clinical tool draws from the same source of truth. No drift between what the protocol says and what the team does.

A marketing lead wired her competitive intel tracker, brand guidelines, past campaign post-mortems, and customer segmentation data into one context layer. Every piece of copy her AI drafts now references which angles have been tried, which headlines converted, which objections the sales team heard last week. The gap between her first draft and her final draft shrank from six revisions to one. That is not efficiency. That is her accumulated marketing instinct suddenly running at the speed of software.

A sales engineer connected his solution architecture docs, objection-handling playbook, and competitive battlecards. When a deal comes in for a complex integration, the AI already knows the technical constraints, the historical objections, and which reference customers to cite. The "let me get back to you" response - the single biggest deal-killer in enterprise sales - dropped by 70%. That is a pipeline conversion story the CFO can read on one line.

Imagine if you never had to "send the doc" again. Imagine if every tool you opened tomorrow already knew what you decided yesterday, what your team agreed last week, what your customers said last month. The mental load you currently spend remembering where things live - gone. The cognitive context-switching tax - gone. What would you do with the brain space you'd reclaim? That alone is a career-altering question.

The practical setup: Pick your 3 most-used tools. Connect each to your knowledge base via MCP or API. Start with read access - let AI reference your existing work. Then add write access - let AI contribute back. Total investment: one afternoon.

How to connect your first 3 tools this afternoon:

(1) Notion → Claude: Install the Notion MCP server and authorize your workspace. Claude can then query the pages, databases, and documents you have granted it access to.

(2) GitHub → Claude Code: Add a CLAUDE.md file to your repo root with your architecture patterns and constraints - Claude Code reads it automatically.

(3) Slack → your knowledge base: Set up a Zapier flow that watches key Slack channels and deposits summarized decisions into a Notion database daily.

Three connections. One afternoon. Every AI interaction from tomorrow draws from the same context layer. Expected payoff: 5-10x that time back every single week - and those reclaimed hours don't just mean shipping more. They mean time for deep thinking, for the hardest, most interesting problems in your domain, for the craft that actually differentiates your work from everyone else's.
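For step (2), here is a minimal sketch of what a starter CLAUDE.md could look like, generated from a short list of patterns and constraints. CLAUDE.md is free-form Markdown, so the section names, the helper functions `render_claude_md` and `write_claude_md`, and the example project are all assumptions for illustration, not a required layout.

```python
from pathlib import Path


def render_claude_md(project: str, patterns: list[str], constraints: list[str]) -> str:
    """Return the text of a starter CLAUDE.md (section names are illustrative)."""
    lines = [f"# {project}", "", "## Architecture patterns"]
    lines += [f"- {p}" for p in patterns]
    lines += ["", "## Constraints"]
    lines += [f"- {c}" for c in constraints]
    return "\n".join(lines) + "\n"


def write_claude_md(repo_root: Path, text: str) -> Path:
    """Write the file at the repo root, where the text above says Claude Code looks."""
    target = repo_root / "CLAUDE.md"
    target.write_text(text, encoding="utf-8")
    return target


# Hypothetical project and contents; replace with your team's real decisions.
example = render_claude_md(
    "notifications-service",
    patterns=["Handlers stay thin; business rules live in the domain layer."],
    constraints=["No new runtime dependencies without an ADR."],
)
print(example)
```

Calling `write_claude_md(Path("."), example)` from the repo root would create the file. Keep it short and keep it current: a stale constraints list misleads the AI the same way it misleads a new hire.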

The shift is from "I use AI in this tool" to "AI flows across all my tools." Your design context informs your code generation. Your code constraints inform your design suggestions. Your customer research informs both. Your clinical protocols inform operations. The network effects on knowledge kick in the moment the walls come down - like a poker player who can suddenly see every card on the table. Full stop.

The 100x Team & Business

Think about it - at the team level, collaborative spaces mean shared surfaces where humans and AI contribute with full context. The economics here are staggering.

Most teams run two parallel worlds: the human world (Slack, meetings, docs) and the AI world (individual prompt sessions). Information flows between them through copy-paste, manual summaries, and meetings where someone explains "what the AI said." Net-net, this doubles communication overhead instead of cutting it. You are paying for AI and then paying again to manually distribute its output. That is like buying a dishwasher and then hand-washing every plate before you load it.

What's stopping you from collapsing those two worlds into one this quarter? Not the tools - they exist and most of them are free or near-free. Not the talent - any reasonably technical person can wire MCP integrations in a weekend. The blocker is almost always organizational: the comfort of working in tabs the way you've always worked. The teams that break that comfort are the teams that compound. The ones that don't end up paying the context tax forever.

The punchline is this: shared workspaces where human contributions and AI contributions live side by side, drawing from the same knowledge base. A product brief starts as an AI draft grounded in persona research and competitive context. The PM refines the judgment calls. The product designer adds UX direction and visual strategy. The AI incorporates those inputs into the next iteration. The engineer flags technical constraints that feed back into the design. Everyone sees the full history. Zero information loss. That is network effects on knowledge - every participant makes the shared context more valuable for every other participant.

How to build a shared context layer for your team: Create a single Notion workspace (or Confluence space) structured by function - Product, Engineering, Design, Operations - where each team deposits insights in a consistent format. Connect this workspace to Claude via MCP. Now when the PM asks Claude "What engineering constraints apply to the notifications feature?" the answer draws from engineering's actual documented decisions. When the designer asks "What did users say about onboarding?" the answer draws from the PM's customer research. No meetings required. The workspace is the shared brain.
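The "consistent format" is the part that does the work. As a rough sketch, assuming each insight is a small record tagged by function and topic, the shared workspace reduces to two operations: deposit and lookup. The `Insight` and `SharedWorkspace` names, their fields, and the sample entries are hypothetical; in practice the records would live in Notion or Confluence and the AI would reach them through MCP.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Insight:
    """One deposited insight in a consistent format (fields are illustrative)."""
    function: str      # e.g. "Product", "Engineering", "Design", "Operations"
    topic: str
    summary: str
    source: str
    recorded_on: date


@dataclass
class SharedWorkspace:
    """In-memory stand-in for the shared knowledge base."""
    insights: list[Insight] = field(default_factory=list)

    def deposit(self, insight: Insight) -> None:
        self.insights.append(insight)

    def lookup(self, function: str, topic: str) -> list[Insight]:
        """Return one function's insights on a topic, newest first."""
        matches = [
            i for i in self.insights
            if i.function == function and topic.lower() in i.topic.lower()
        ]
        return sorted(matches, key=lambda i: i.recorded_on, reverse=True)


# Made-up entries standing in for real team knowledge.
workspace = SharedWorkspace()
workspace.deposit(Insight("Engineering", "Notifications feature",
                          "Push delivery is rate-limited by the provider.",
                          "ADR (hypothetical)", date(2024, 5, 2)))
workspace.deposit(Insight("Product", "Onboarding",
                          "Users stall at the permissions screen.",
                          "customer call notes (hypothetical)", date(2024, 5, 6)))

# The PM's question from the text, answered from engineering's own records.
constraints = workspace.lookup("Engineering", "notifications")
for item in constraints:
    print(f"{item.recorded_on}: {item.summary} [{item.source}]")
```

The design choice worth copying is the tagging, not the class: if every team records function, topic, source, and date the same way, any AI connected to the workspace can answer cross-team questions without a meeting.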

One team deployed custom interfaces for three groups: business, clinical, and operations. All three read from and write to the same central platform. When the business team updates a customer insight, the clinical team's AI immediately has that context for care decisions. When operations identifies a workflow bottleneck, the engineering team's AI factors it into sprint planning. When a clinician documents a protocol adjustment, it flows to ops automatically. No meetings. No Slack threads. No "can you send me that doc?" The marginal cost of sharing context dropped to zero. And the meetings that disappeared? That time went to the work that actually makes a difference - deep clinical thinking, hard operational problems, strategic decisions that had been perpetually deferred. The speed of context-sharing isn't just about moving faster. It's about unlocking time for the deep craft and hard problems that no meeting could solve anyway.

The critical design principle: AI is a participant in your system, not a replacement for your team. It surfaces relevant context when the team needs it - "Based on the last 3 customer calls, this feature request aligns with a pattern in the enterprise cohort." The PM decides what to do with that insight. The AI extends the team's reach. The knowledge store carries the team's accumulated intelligence. But the humans direct, decide, and refine. You're not outsourcing your team's intelligence to AI. You're empowering agents to build with your team - as an extension of your collective skills. That is how you deploy intelligence at scale.

Picture this. A new hire starts on Monday. By Tuesday afternoon, her AI has access to every customer interview the research team has conducted, every architecture decision the engineers have made, every design principle the product team has debated, every campaign the marketing org has shipped. She asks her first real question and gets an answer grounded in the accumulated judgment of her entire team. No shadowing. No "let me introduce you to the person who knows this." No two-month onboarding purgatory where she's billable in theory but contributing nothing in practice. The only thing standing between you and that onboarding experience for your next hire is whether your tools share a brain. The tools already exist. The only question is whether you will spend a single afternoon wiring them up.

Where This Applies

A product team built a shared research surface. Customer interviews, competitive analysis, usage data - all feeding one AI-accessible layer. When any team member asks a product question, the AI answers with the full research context. Not the 15% slice one person happens to remember. The information asymmetry inside their own team dropped to near zero. That alone eliminated two weekly syncs.

A clinical team built a patient context surface. Every interaction - calls, notes, assessments - feeds a shared view. Before any patient encounter, the AI assembles the complete picture. No one walks in cold. No one asks the patient to repeat information. Care quality went up measurably. Think of it like a quarterback getting the full playbook instead of one page at a time.

A distributed engineering team built a shared architecture surface. Technical decisions, ADRs, deployment patterns, incident learnings - one AI-accessible layer. New team members get answers grounded in actual decisions, not generic patterns. Onboarding time dropped by 40%. At $180K average fully-loaded eng cost, that is real money.

An operations team built a shared workflow surface. Every process decision, exception pattern, and vendor interaction feeds one system. New hires deploy with operational guidance grounded in the team's actual experience. The "ask the senior person" bottleneck - the single most expensive knowledge constraint in any scaling org - disappeared.

A product design team connected Figma, GitHub, Notion, and Linear through shared context. The walls between design and engineering dissolved because the AI carries context across both domains. The "that's not technically feasible" feedback loop - which used to cost 3-5 days per cycle - dropped to near zero.

A customer success team connected their CRM, product analytics, and support ticket history into a shared context surface. Every time a CSM opens an account, their AI already knows the usage trends, the open tickets, the contract renewal date, and which feature requests the customer has championed. The "let me pull up the account" dance that used to eat the first five minutes of every call just vanished. Multiply that by 40 calls a week per CSM across a 6-person team and you have recovered about 20 hours, half a working week, every single week, for higher-value conversation.

Think about it like this. The best sports teams in the world don't win because their players are individually better. They win because the players read the same plays, see the same field, anticipate each other's moves. Your team is already talented. The question is whether your tools let them play as a unit or force them to play as five individuals on the same court. Connected context is the play-calling system. Without it, every possession starts from scratch.

And here is the uncomfortable truth about team AI adoption: most of the productivity gains you read about in case studies come from the shared context layer, not from the AI model itself. The teams showing 3x and 5x output gains aren't using smarter models than you. They're feeding the same models a dramatically richer context. Same tools, different leverage. The alpha was never in the technology. It was in the wiring.

Here's the thing: collaboration scales when context is shared, not when meetings are more frequent. Every meeting that exists solely to transfer information is a tax on your organization. Build the shared surface. Let AI and humans both contribute - with humans directing and AI extending. The value is the triad: your people, your shared knowledge, and AI working together. You're not handing collaboration to AI. You're making your team's collaboration compound through AI. That is how you compound team intelligence.

Examples: How Others Have Made This Real

These aren't hypotheticals. Real teams are building shared context layers where humans and AI collaborate - and the tools to do it exist today.

Notion + MCP integrations - teams connect their entire Notion workspace to Claude, creating a shared brain that every team member's AI draws from. The PM's customer research enriches the designer's AI. The engineer's technical constraints flow to product automatically. One knowledge base, many AI consumers. Zero copy-paste.

Linear + Slack + Claude - product teams connect their issue tracker and communication channels into a shared context layer. When anyone on the team prompts AI, it already knows the backlog state, recent discussions, and customer feedback. No one starts from zero. The "can you send me that doc?" overhead drops to near zero.

Figma + GitHub + Cursor - design engineering teams connect their design system, codebase, and project context through shared integrations. When a developer asks AI for a component, it references the actual Figma tokens and the actual code patterns. The wall between design and engineering dissolves because the AI carries context across both.

Dust.tt provides team-level AI workspaces where multiple people's AI agents draw from the same connected data sources - Notion, Slack, GitHub, CRM, Google Drive. When the PM's agent produces a brief, the engineer's agent can reference it with full context automatically. That's a shared brain, not parallel monologues.

Granola captures meeting context and makes it available to the entire team's AI tools. A sales call insight is immediately available to the PM's AI. A product decision from a design review reaches engineering's AI without anyone forwarding a Slack message. Context flows as a byproduct of having meetings - no extra work.

Microsoft's Copilot ecosystem connects Word, Teams, Outlook, and SharePoint into one shared context layer. When a team member prompts Copilot, it draws from shared documents, meeting transcripts, and email threads. The vision - even if execution is still maturing - is exactly right: AI should have the full team's context, not just one person's tab.

Vercel's AI SDK lets engineering teams build shared AI interfaces where multiple tools and data sources feed into one context layer. Custom dashboards where business, engineering, and operations all read from and write to the same AI-accessible system. The shared surface pattern, implemented as infrastructure.

Ask Yourself

These questions reveal whether your team is actually collaborating with AI - or just using AI next to each other.

Do your AI tools share a brain - or are they all silos? When your product designer prompts AI, does it know about the PM's customer research? When your engineer prompts, does it reference the design system? If every tool starts from zero context, you have parallel monologues - not collaboration.

Where are your coworking spaces for AI and teams? Not Slack channels. Not meeting rooms. Shared digital surfaces where both humans and AI contribute with full context. Where a PM's insight enriches the product designer's AI automatically. Where an engineer's constraint flows to product without a meeting. Do you have these spaces? Or is information still flowing through copy-paste?

Can multiple agents and team members all work on the same thing seamlessly? The real test: start a product brief. Can the PM's AI draft it, the product designer's AI reference it with full context, and the engineer's AI flag constraints - without anyone manually bridging between them? If not, your tools are disconnected.

How many meetings exist solely to share context that should already be shared? Every standup where someone explains "what the AI said." Every sync where someone re-establishes context. Those meetings are symptoms of disconnected tools, not collaboration needs. What percentage of your meetings would vanish if context flowed automatically?

What's your team's knowledge contribution loop? When someone learns something - from a customer call, a design decision, a code review, a clinical encounter - does that knowledge enrich the system? Or does it stay in their head until someone asks?

Which 3 tools would you connect first? Pick the three your team uses most. Now imagine they share the same context layer via MCP. What changes? That's your starting point.